Breathing Life Into Mixed Reality Through Sound

Sound is such an important aspect of world building for all types of extended reality experiences. It’s something we often take for granted while watching a movie or playing a game. If you don’t believe me, try muting the audio next time you watch a horror movie!

In a VR experience, where the environment is 100% virtual, sound transports the player to an entirely new world. And because audio is built into the headset, there is a real opportunity to immerse the user in that experience.

For mixed reality experiences, sound is even more important because you have the added challenge of designing around the player’s existing physical space. The player is already familiar with their own environment, including its dimensions, furniture, and individual objects. Mixed reality adds a virtual layer over that physical environment, but to make it feel real and believable we, as designers, need to be very sensitive to how these virtual elements (visuals and sound) are integrated.

It’s our job to seamlessly blend the physical and virtual worlds. And because players often play in complete silence, sound provides an excellent opportunity to support narrative design and world building.

Collaborating With Envelope Audio

I was fortunate to first collaborate with Fremantle-based studio Envelope Audio a few years ago on an augmented reality mobile app. On that project I was Technical Producer, and they provided sound and music production services. I was really impressed with their creativity, their collaborative nature, their technical guidance and their obvious expertise in audio FX, foley design, music composition and sound production. Their skill and experience are demonstrated across a range of creative projects including film, TV and immersive experiences.

So when I began working on my Mycelium mixed reality project, reaching out and inviting them on board was a no-brainer.

As with any collaboration between creative professionals who work in different technical fields, one of the initial difficulties is reaching a common understanding of the project’s overall scope and vision. I addressed that challenge by sharing as many references as early as possible. These included mood boards, storyboards, a narrative overview and inspirations from games, movies and immersive experiences.

I articulated the overall vision, narrative arc and world building that the game was trying to achieve. I also highlighted how audio effects had the practical and important task of supporting design and user experience elements.

We also organised an in-person meeting so that they could get hands-on experience with the Meta Quest 3. This was a great opportunity to chat about the hardware’s technical specifications, the production pipeline, the specific assets required and how they would be delivered, and the overall potential of sound and audio elements. We also discussed how the assets would be created and integrated within our project.

Envelope Audio Studio. Image: Natalie Marinho

Technical Integration Into A Mixed Reality Project Built With Unity

We had early conversations about using FMOD given that this was a spatial experience.

FMOD is an audio engine and authoring tool for creating adaptive audio in video games and immersive applications. Its primary strength is that it allows developers to change sound dynamically during gameplay, giving full control over music and sound FX.

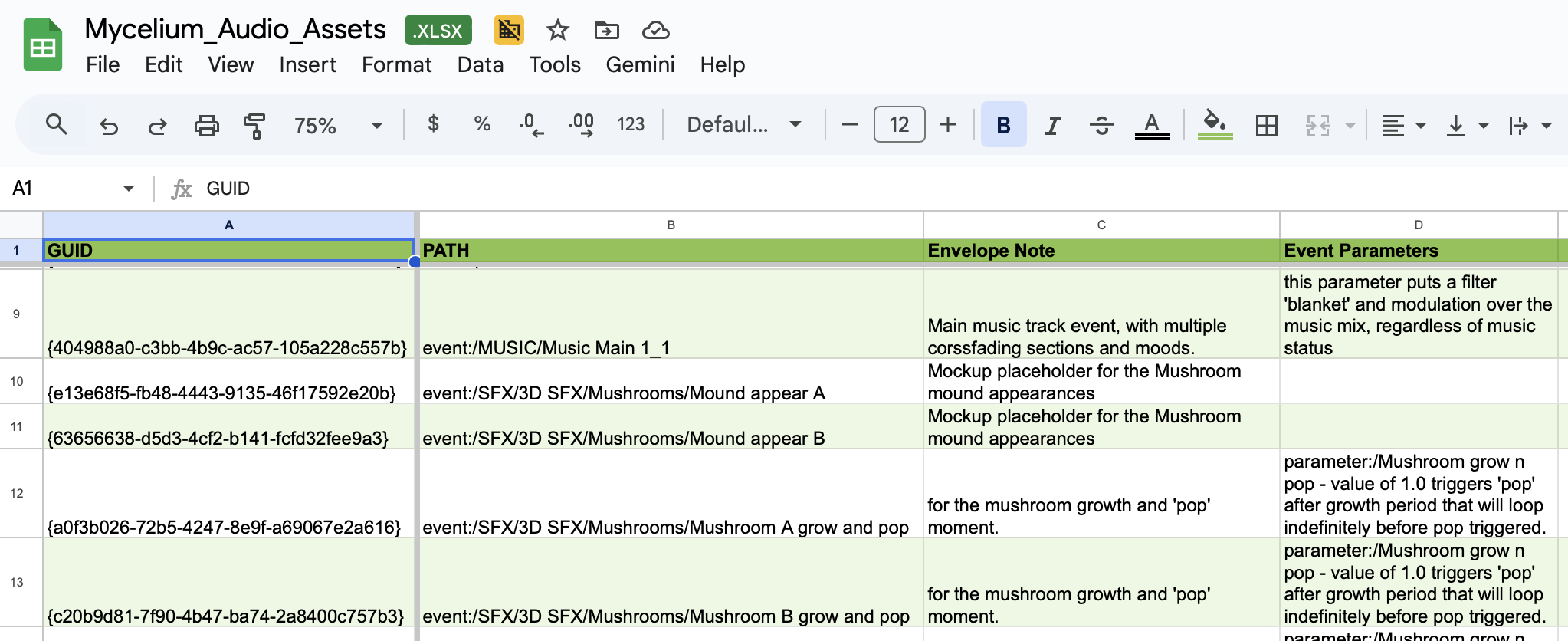

I created an initial spreadsheet listing all our audio requirements, matched to the storyboards we had supplied. Envelope then updated the list with the relevant filenames, descriptions and parameters.

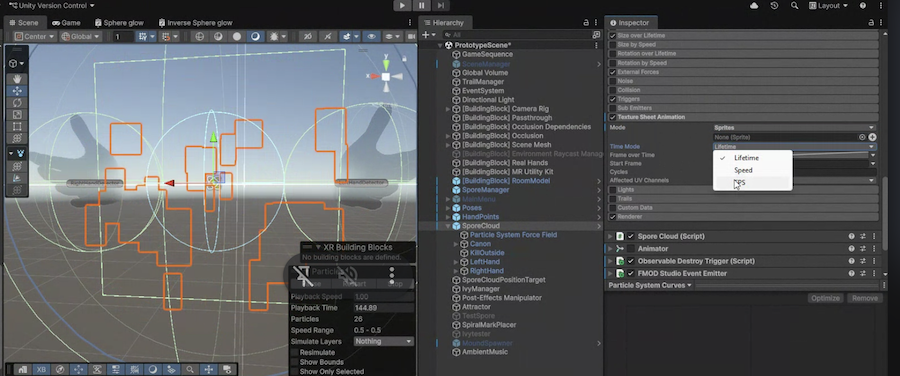
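To make the shape of that handoff concrete, here is a minimal Python sketch that treats the spreadsheet as a CSV export and lists any storyboard cue still missing a filename. The column names (`cue`, `filename`, `description`, `parameters`) and the sample rows are hypothetical, invented for illustration rather than copied from our actual sheet.

```python
import csv
import io

# Hypothetical CSV export of the shared audio requirements spreadsheet.
# Column names and rows are illustrative only.
SHEET = """cue,filename,description,parameters
spore_release,sfx_spore_release,Soft puff as spores burst,intensity
mushroom_grow,sfx_mushroom_grow,Gentle creak as a mushroom sprouts,growth
ambient_intro,,Reflective ambient loop for the opening scene,
"""


def missing_assets(sheet_text):
    """Return the cues that have no filename assigned yet."""
    reader = csv.DictReader(io.StringIO(sheet_text))
    return [row["cue"] for row in reader if not row["filename"].strip()]


print(missing_assets(SHEET))
```

Keeping one row per storyboard cue means a gap in the audio list shows up as an empty cell rather than a forgotten moment in the experience.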

In FMOD, audio assets are known as events. They are authored in FMOD Studio, where the project is saved as an .fspro file, and exported as .bank files that are loaded directly in Unity. Depending on how the events and their parameters were set up, Unity exposes these parameters as fields that the developer can manipulate for each scenario, which provides a lot of creative control. This is great for testing and getting a feel for what works and what doesn’t during gameplay.
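Those parameters are what make the audio adaptive. As a conceptual sketch only, in plain Python rather than FMOD’s API, the function below shows one common way a single parameter can drive two layers: an equal-power crossfade between a reflective ambient loop and a more playful one as a hypothetical `mood` parameter moves from 0 to 1. In FMOD Studio this kind of curve would normally be drawn as parameter automation rather than written in code.

```python
import math


def equal_power_crossfade(mood):
    """Map a 0..1 'mood' parameter to gains for two ambient layers.

    Sine/cosine (equal-power) curves keep the combined power constant
    across the fade, so there is no loudness dip in the middle the way
    a straight linear blend would produce.
    """
    t = min(max(mood, 0.0), 1.0)  # clamp to the parameter's range
    reflective_gain = math.cos(t * math.pi / 2)
    playful_gain = math.sin(t * math.pi / 2)
    return reflective_gain, playful_gain


for mood in (0.0, 0.5, 1.0):
    r, p = equal_power_crossfade(mood)
    # r**2 + p**2 stays at 1.0 throughout the fade
    print(f"mood={mood:.1f} reflective={r:.3f} playful={p:.3f}")
```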

How Soundscapes Support Narrative Design and World Building

As Narrative Designer, I knew the soundscape was a critical component of world building, but I didn’t know much about what goes into designing and creating sounds, effects and music.

This project was an amazing learning experience, both collaborating with Envelope Audio and, later, in discussions with our team’s Unity Developer and co-Game Designer, Kimberley Smith.

Storytelling

I really loved Envelope’s existing playlist, Magic Moments, after hearing it while researching soundscapes for the game. After we referenced some specific tracks, Envelope helped to craft similar ambient loops for the game. We had a great discussion about the subtle shift from a melancholic/reflective/meditative atmosphere into a curious/playful/uplifting one, and how Envelope were able to achieve this through different tempos, instruments and melodies.

I think one of the most important aspects is evaluating when and how to use sound, for example:

Aligning ambient sound and music with world building and narrative arcs

Guiding the player’s attention and direction of focus

Supporting emotional beats

Signalling a shift in scene/mood

Environmental and Character Design

As Mycelium is a cosy game, we really want to create an atmosphere that feels magical, intimate and cosy. The soundscape and music have been an important part of this.

How to create the effect of being in a small space (intimate and cosy) versus an expansive one (for big climactic scenes).

Creating sounds for our “characters” and actions including the mycelium, mushrooms and spores.

The project also stretched my ability to describe sounds! I had to really stop and consider the exact sound I wanted but also the emotional connection I wanted the player to experience.

User Experience

As Narrative Designer, I made a very early design decision to use hand tracking as the only user input in the game. I felt this was important to establish a direct and intimate connection with the characters and environment. I recognised that this decision would mean there was no haptic feedback, nor the tactile feedback of pressing a button on a controller. Audio therefore had to pick up some of that work:

Reinforcing hand interactions: audio feedback stands in for the haptics and button presses that controllers would otherwise provide

Guiding attention: the player is rarely looking in the direction you intend, so audio can alert them to something elsewhere in the space
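To illustrate why audio is so useful for guiding attention, here is a simplified Python model of how a spatialised sound might reach each ear: volume falls off with distance, and the sound is panned toward the side it comes from. It rests on stated assumptions (inverse-distance rolloff, a constant-power pan law, Unity-style axes with +z forward and +x to the right) and is not how FMOD or the Quest actually spatialise audio; real spatialisers also use HRTFs to convey height and front/back, which plain stereo panning cannot.

```python
import math


def spatial_cue(listener_pos, listener_yaw, source_pos,
                min_distance=1.0, max_distance=10.0):
    """Rough left/right gains for a sound source relative to the player.

    Positions are (x, z) tuples in metres on the horizontal plane.
    listener_yaw is the facing direction in radians (0 = facing +z,
    positive = turned toward +x). All parameters are illustrative.
    """
    dx = source_pos[0] - listener_pos[0]
    dz = source_pos[1] - listener_pos[1]
    distance = math.hypot(dx, dz)

    # Inverse-distance rolloff: full volume inside min_distance,
    # falling off as min/d, and held constant beyond max_distance.
    d = min(max(distance, min_distance), max_distance)
    gain = min_distance / d

    # Angle of the source relative to where the player is facing.
    azimuth = math.atan2(dx, dz) - listener_yaw
    pan = math.sin(azimuth)  # -1 = hard left, +1 = hard right

    # Constant-power pan law splits the gain between the two ears.
    angle = (pan + 1.0) * math.pi / 4
    left = gain * math.cos(angle)
    right = gain * math.sin(angle)
    return left, right


left, right = spatial_cue((0.0, 0.0), 0.0, (2.0, 0.0))
print(f"left={left:.3f} right={right:.3f}")
```

In this model, a spore cue placed 2 m to the player’s right arrives at half amplitude and entirely in the right channel, which is exactly the kind of nudge that makes a player turn their head.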

I also learned about a range of technical considerations when incorporating audio elements into a scene, including:

How the soundscape would be layered, as multiple sounds, ambient loops and music would be playing simultaneously

Ensuring that all the different sounds didn't compete with each other or sound discordant

Ensuring the music was tonally aligned with the audio effects, and vice versa

How a sound element could be manipulated, and how to make those changes in FMOD and Unity.
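One common technique for keeping layers from competing is ducking: temporarily lowering the music while an important effect plays. The Python sketch below shows the idea with hypothetical mix levels and a hypothetical -6 dB duck; in practice this is usually configured in the audio middleware’s mixer rather than hand-coded, so treat it as an illustration of the arithmetic, not of our actual setup.

```python
def db_to_gain(db):
    """Convert a level in decibels to a linear amplitude multiplier."""
    return 10 ** (db / 20)


# Hypothetical mix levels, in dB, for three layers playing at once.
BASE_LEVELS_DB = {"ambient": -12.0, "music": -9.0, "sfx": -3.0}
DUCK_DB = -6.0  # how far the music dips while a feedback effect plays


def layer_gains(sfx_active):
    """Return linear gains per layer, ducking the music when an SFX is active."""
    gains = {}
    for layer, level_db in BASE_LEVELS_DB.items():
        if layer == "music" and sfx_active:
            level_db += DUCK_DB
        gains[layer] = db_to_gain(level_db)
    return gains


quiet = layer_gains(sfx_active=False)
ducked = layer_gains(sfx_active=True)
# A -6 dB duck roughly halves the music's amplitude
print(round(ducked["music"] / quiet["music"], 3))
```

Working in decibels keeps the mix decisions readable, while the linear gains are what actually scale the audio signal.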

It was honestly a great experience to learn about the amazing world of soundscape design and creation. The experience has really helped me reflect on my own creative process while adding new skills for narrative design and world building. I look forward to exploring this further in all my future projects!

Main image credit: Photo by Denisse Leon on Unsplash